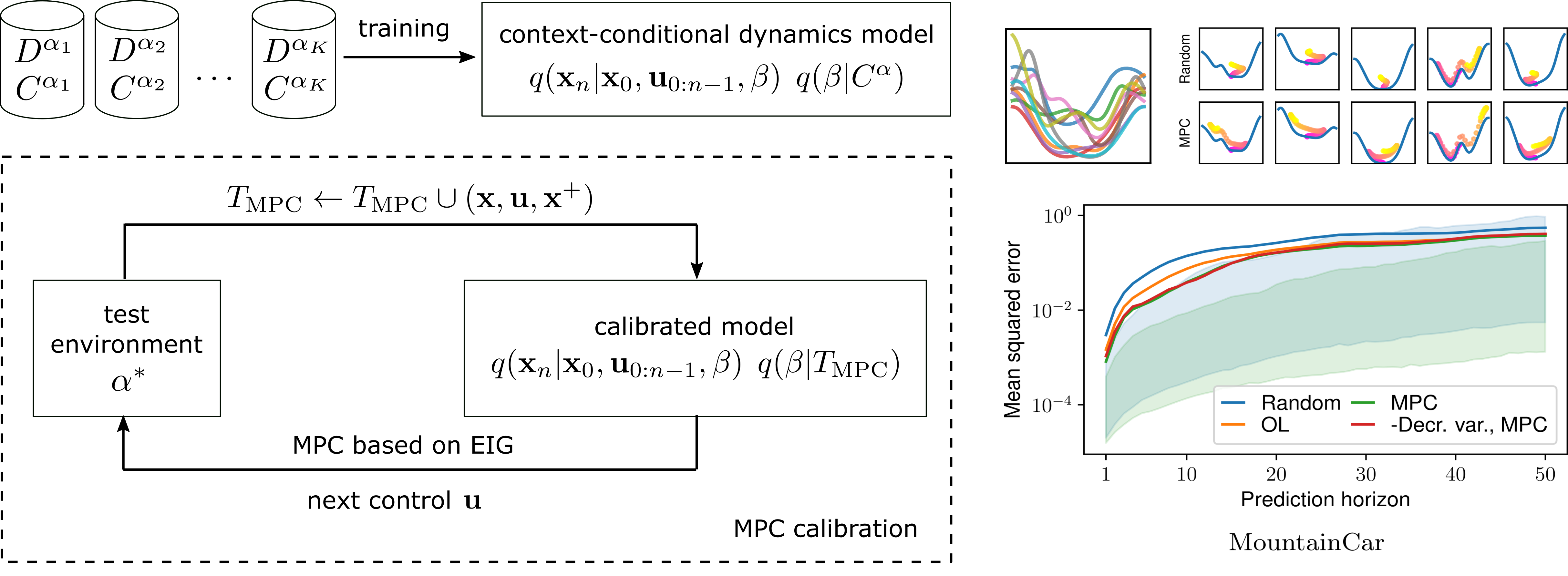

Learning families of dynamics models conditioned on context variables $\beta$ in Explore-the-Context [ ]. The models can be used for system identification via model-predictive control (MPC) with an objective based on expected information gain.

Complex everyday environments pose a challenge to the implementation of autonomous robots that purposefully act in these environments. A classical approach for developing autonomous robots is to hand-craft object and environment models that are used for control and planning. These models, however, are typically limited by the implicit assumptions the engineers make about the properties and functioning of the environment, such that the designed system might not generalize to unseen tasks and situations. In this project, we aim to overcome these limitations by enabling agents to learn dynamics models from observations and interaction experience for scene understanding, control and planning.

In Deep Latent Gaussian Process Dynamics (DLGPD [ ]) we develop a novel method which encodes pairs of images of a simulated inverted pendulum into latent states using deep learning and learns action-conditional transition and reward models as Gaussian processes (GPs) on the latent states. A CNN decodes the latent states back into images for self-supervised learning. The hyperparameters of the GPs and the parameters of the neural networks are trained jointly. The approach achieves more sample-efficient transfer learning to modified environments (different pendulum mass, inverted sign of the applied torques) than a purely deep-learning-based approach, since the GPs can be conditioned on new transition examples from the modified environments.
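
The transfer argument rests on a basic property of GP regression: with fixed hyperparameters, predictions change as soon as the GP is conditioned on new data. The following is a minimal sketch of that property, not the DLGPD implementation. It assumes a hand-written RBF kernel, a two-dimensional input made of a scalar latent state and a scalar action, and toy transition rules (`z + 0.1 * u` and its sign-inverted counterpart); all names and values are illustrative.

```python
import numpy as np

def rbf_kernel(a, b, lengthscale=1.0, variance=1.0):
    """Squared-exponential kernel between row-wise inputs a (n, d) and b (m, d)."""
    sq_dist = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return variance * np.exp(-0.5 * sq_dist / lengthscale**2)

def gp_posterior_mean(x_train, y_train, x_test, noise_var=1e-4):
    """Posterior mean of a zero-mean GP conditioned on (x_train, y_train)."""
    k_train = rbf_kernel(x_train, x_train) + noise_var * np.eye(len(x_train))
    k_cross = rbf_kernel(x_train, x_test)
    chol = np.linalg.cholesky(k_train)
    # Solve (K + noise * I) alpha = y using the Cholesky factor.
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    return k_cross.T @ alpha

# Toy inputs: (latent state z, action u) on a small grid (illustrative assumption).
grid = np.array([[z, u] for z in (-1.0, 0.0, 1.0) for u in (-1.0, 0.0, 1.0)])

# Hypothetical next latent states in the original and the modified environment
# (the modified one inverts the sign of the applied action).
next_original = grid[:, 0] + 0.1 * grid[:, 1]
next_modified = grid[:, 0] - 0.1 * grid[:, 1]

query = np.array([[0.0, 1.0]])  # z = 0, u = 1
mean_original = gp_posterior_mean(grid, next_original, query)[0]
mean_modified = gp_posterior_mean(grid, next_modified, query)[0]
print(mean_original, mean_modified)
```

Conditioning the same GP on transitions from the modified environment flips the predicted direction of the latent state without retraining any parameters, which is the mechanism the paragraph above credits for sample-efficient transfer.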

In Explore-the-Context (EtC [ ]) we present a novel method for dynamics model learning which learns a whole family of dynamical systems. This is useful for environments in which a range of hidden parameters can vary and determine the dynamics. We learn a probabilistic neural model which encodes a set of example transitions in the environment into a context variable. The probabilistic dynamics model is conditioned on this context variable. We demonstrate that the probabilistic model can be used for planning actions using model-predictive control with expected information gain as the objective. In this way, the agent chooses actions that efficiently identify the system. Once the context variable is identified, the trained models can also be used for model-predictive control to achieve task goals.
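
To illustrate how expected information gain can drive action selection, the following sketch replaces the learned neural model with a toy linear-Gaussian system in which the quantity is available in closed form. It is not the EtC model: the scalar hidden parameter `beta`, the observation rule `y = beta * action + noise`, the candidate action set, and the one-step planning horizon are all assumptions made for this example.

```python
import numpy as np

def expected_information_gain(action, belief_var, noise_var):
    """Mutual information between beta and y = beta * action + noise (Gaussian case)."""
    return 0.5 * np.log(1.0 + belief_var * action**2 / noise_var)

def choose_action(candidates, belief_var, noise_var):
    """One-step lookahead: pick the candidate with the largest expected information gain."""
    gains = expected_information_gain(candidates, belief_var, noise_var)
    return candidates[int(np.argmax(gains))]

def update_belief(mean, var, action, y, noise_var):
    """Bayesian update of the Gaussian belief over beta after observing y."""
    precision = 1.0 / var + action**2 / noise_var
    new_var = 1.0 / precision
    new_mean = new_var * (mean / var + action * y / noise_var)
    return new_mean, new_var

rng = np.random.default_rng(0)
true_beta = 2.0          # hidden parameter, unknown to the agent
noise_var = 0.1          # observation noise variance (assumed known)
candidates = np.linspace(-1.0, 1.0, 5)

mean, var = 0.0, 1.0     # prior belief over beta
for _ in range(5):
    a = choose_action(candidates, var, noise_var)
    y = true_beta * a + rng.normal(0.0, np.sqrt(noise_var))
    mean, var = update_belief(mean, var, a, y, noise_var)

print(mean, var)
```

In this toy setting the agent always selects an action of maximal magnitude, since a zero action reveals nothing about `beta`. In the setting described above, the belief over the context variable comes from the learned encoder and the objective is evaluated over a planning horizon rather than in closed form.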